Educational programs are not being held accountable for long-term impact. This is a problem. The current edtech market is not set up to expect accountability at scale, but rather to hop from fad to fad. This makes it especially challenging for education leaders to make wise choices, and it inhibits innovation and continuous improvement by program developers.

Let’s take a look at three aspects of the problem, which feed into each other:

All of the above conspire to inhibit continuous improvement. Currently, educational programs’ here-today, gone-tomorrow claims about usability, effectiveness or even pedagogical features are similar, loud, confusing and lack a basis for validation. We need a better path for:

At MIND Research Institute, we are taking advantage of a uniquely effective program and a philanthropically-powered adoption track record to show another way. We believe educational leaders should always be provided sufficient evidence to reasonably predict program impact at scale, over the long run, for their specific mix of students and teachers.

So what does it look like to provide schools and districts with repeatable, predictable, long-term impact for all groups of students? This is a question our school partners, teachers and students have been answering, at scale, over the last eight years.

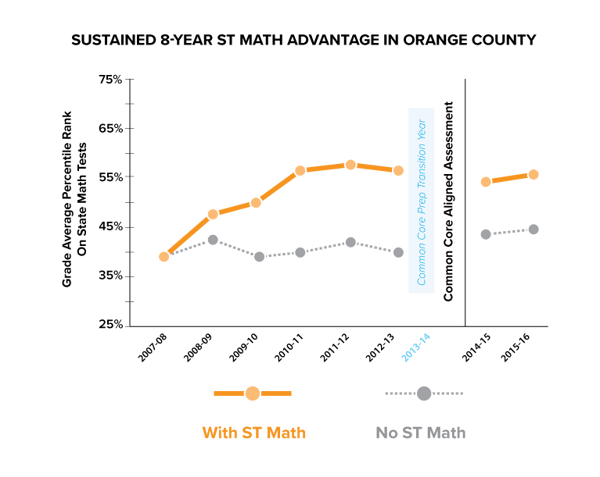

The partnership of ST Math© and Orange County schools is a proof of concept that shows the impact an effective program can have on a diverse county of 3.5 million. We are proud of the results our great district partners have achieved in fidelity of program use at scale, in engaging diverse student subgroups and in state test score advantages, evaluated consistently over an eight-year period.

The ST Math (Spatial Temporal Math) instructional program was created by neuroscientists and mathematicians at the University of California, Irvine. The program offered a uniquely visual way to explore and see how math works at a conceptual level, avoiding the confusion of unfamiliar words and symbols. It was very different from any traditional math content, and thus had a chance to work better than merely digitizing traditional math approaches. And indeed, time has shown that it works at scale.

But before any large-scale studies, only brain-based learning devotees and rare visionary principals, one school at a time, would give ST Math a try. In the huge region of Orange County, Calif., only a handful of new schools were adopting each year. Fortunately, those adoptions achieved grade-wide use, the teachers loved ST Math and the program was implemented with fidelity. But organic growth wasn't happening.

Even though the early adopters achieved great results on schoolwide tests, principals at neighboring schools attributed that success to unique leadership or a high-performing staff that couldn't be replicated elsewhere.

Crucially, there weren't enough schools using ST Math to generate credible, rigorous statistical studies—to provide the evidence of how the program could impact all teachers and students in the county.

As a non-profit, we decided to approach local business and community partners to help proactively spread this program to schools that needed help with math. These visionary philanthropists, intrigued by the early results, said yes. Thus our ST Math School Grants program, now nationwide, began in Orange County.

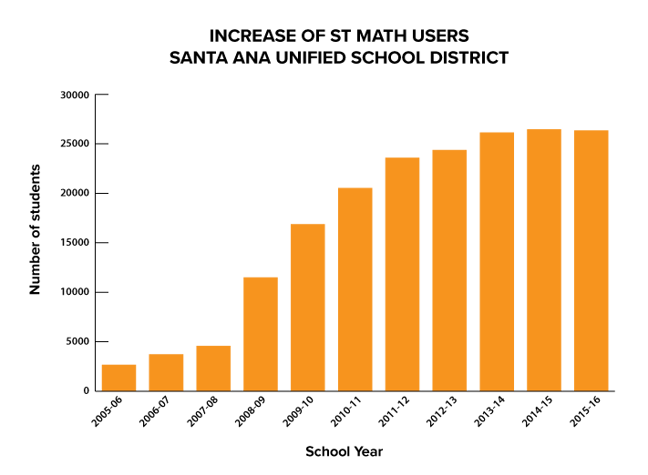

Our partners eventually contributed the grant support to spread ST Math to over 100 local low-performing schools within a two-year span. Because of the results they saw in their classrooms, these districts opted to continue the program with their own budgets after the end of the first grant-supported year. Since then, over 95% of grantee schools have continued to use the program for seven to eight years.

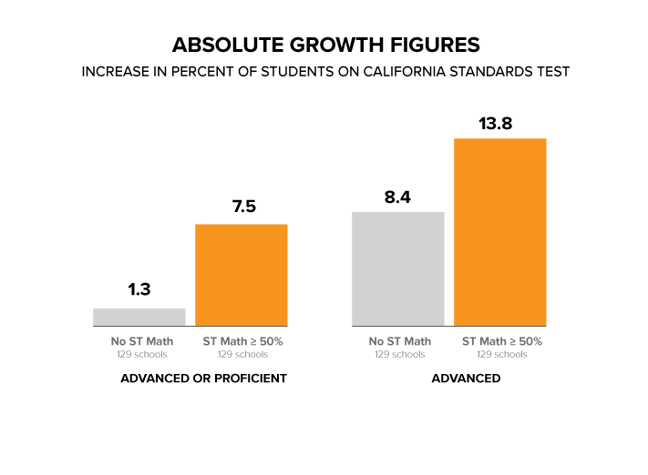

The sheer number of participating schools, combined with long-term use, enabled annual statistical evaluations of published school test score data. These showed a robust and consistent test score signal well above the noise: roughly a 5 to 8 percentage-point advantage in the share of students proficient in math in each grade, each year.

Note that this advantage applied to every level of student—more of our struggling students moved to proficient, and importantly, more of those already proficient moved up to advanced.
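For readers who want to see the arithmetic behind a "point advantage" in percent proficient, here is a minimal sketch in Python. The function names and the student counts are hypothetical, invented purely for illustration. They are not data from the Orange County evaluations, and the sketch does not reproduce the statistical method used in those studies.

```python
def percent_proficient(proficient: int, tested: int) -> float:
    """Share of tested students scoring proficient or above, in percent."""
    if tested <= 0:
        raise ValueError("tested must be positive")
    return 100.0 * proficient / tested


def point_advantage(program: tuple[int, int], comparison: tuple[int, int]) -> float:
    """Percentage-point gap between two (proficient, tested) groups."""
    return percent_proficient(*program) - percent_proficient(*comparison)


# Made-up counts for illustration only (assumption, not real results).
program_grade = (312, 520)     # (students proficient, students tested)
comparison_grade = (270, 500)  # a hypothetical set of similar schools

advantage = point_advantage(program_grade, comparison_grade)
print(f"{advantage:.1f} percentage points")
```

A real evaluation would also need to match schools on prior performance and demographics and to check that the gap exceeds year-to-year noise. This sketch only shows how the headline figure is expressed.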

This initial grants program, limited to struggling Title I schools, ended in 2012. But the program's demonstrated ability to help students move into advanced math proficiency, as well as its uniquely visual nature, continued to attract adoption and eventually reached the county's large suburban districts.

In 2015, on the new, much harder CAASPP tests, the high-performing districts using ST Math also outperformed similar districts without ST Math.

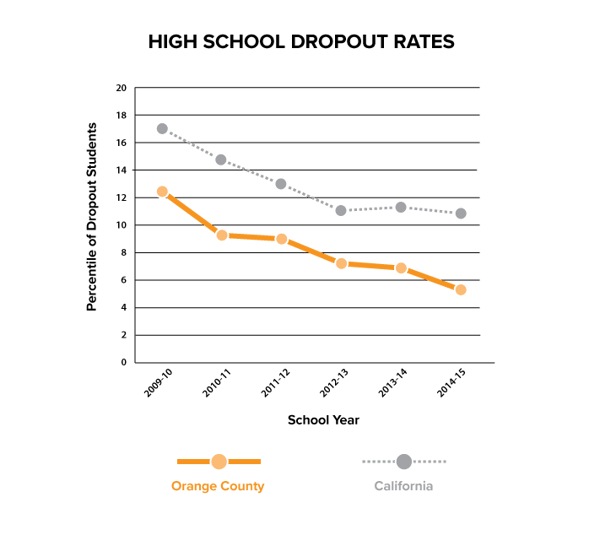

The program currently builds foundational math understanding from kindergarten onwards. Because of its broad reach and long-term adoption in Orange County since 2008, by 2016 enough time had passed for a large number of primary students to work their way through to the end of the K-12 pipeline, potentially affecting graduation rates.

This offers validation of the original ST Math School Grants program goal: that the long-range social investment by the original philanthropic partners in 2008 would see an improved workforce pipeline, delivering strong mathematical problem solvers into the county who will become productive employees, innovators and community leaders.

Our research and studies on the results of using ST Math in large regions over long periods of time continue to reveal the program’s powerful impact on math education.

It is the collective responsibility of all educational program providers to share these results with authenticity, transparency and clarity. This is our leadership model in providing educators with what they need to evaluate and choose effective programs.

Andrew R. Coulson, Chief Data Science Officer at MIND Research Institute, chairs MIND Education's Science Research Advisory Board and drives and manages all research at MIND enterprises. This includes all student outcomes evaluations, usage evaluations, research datasets, and research partnering with grants-makers, NGOs, and universities. With a background in high-tech manufacturing engineering, he brings expertise in process engineering, product reliability, quality assurance, and technology transfer to edtech. Coulson holds bachelor's and master's degrees in physics from UCLA.
